If only, if only, my colleagues say, if only the news media had started charging for content when they launched their first websites.

If only the media had charged, then none of the current problems of free content would have happened, the public would know that content costs money, newspapers and TV stations would have a strong second income stream, and all would be well. There would be lots of good, high-paying jobs and the money to do real journalism rather than celebrity silliness.

If only……

So now there is a search for scapegoats. Media managers who have shown that they are completely incompetent in running traditional print and broadcast are an easy and obvious target.

Others blame the tech community and a misreading of Stewart Brand’s truncated quote, “information wants to be free.”

Then came Wired editor Chris Anderson’s nasty tract, Free. The main flaw in “free” is the assumption that the concept can transfer outside the tech and science fiction communities. Unlike commodity (or atom-based) corporations, creative individuals and most of the media usually find that “free” doesn’t work outside those arenas, an inconvenience that the advocates of “free” constantly ignore. What is left is basically a schoolyard bully’s taunt: “So there, free is the future, so take your medicine and work for nothing.”

Most of the people who ask the question and provide answers were not around in the earliest days of online news media. That is why they believe that if only the media had charged in the early days, all would be well today.

Yes, there was one day, and just one day, when the media, if it had got its act together, could have started charging for online news: September 1, 1993. The trouble was that no major media were on the Internet in a big way that September.

I was present at the creation of online media

I was working in “online media” long before the launch of the World Wide Web, back in the days of videotex and teletext from 1981 to 1985.

The Internet played a role in my science fiction short story Wait Till Next Year, published in Analog in November 1988 (although I got some of the tech details wrong).

I got my first Internet account in August 1993. I was a very early adopter, getting in just before the Internet tsunami that hit a month later, in September 1993.

I co-wrote Researching on the Internet, the first book on the subject, published in the fall of 1995. So I was researching the state of the Internet, the web and the media at the very first moments of news on the web.

I was the third employee assigned to CBC News Online, April 1, 1996.

The cold, hard fact is that the web evolved with free content. It had little to do with Stewart Brand. So when the media first ventured onto the web, they had to play by the rules of the time. Those rules appeared to say, “commerce on the Internet is a no-no.”

The Genesis of the media on the Internet

In the beginning (in 1968-1969), the US Department of Defense created ARPANET.

And DOD saw that it was good.

DOD said let the military and the scientists communicate.

And the military and the scientists communicated.

And DOD saw that it was good. The American taxpayer was getting a good return for their money.

But then there was darkness on the face of ARPANET.

DOD saw that too many people had access to the ARPANET and most of the users didn’t have security clearances.

In 1983 DOD said, we will create a separate MILNET and give ARPANET to the scholars.

In 1984, the National Science Foundation created NSFNET.

And DOD and NSF saw that it was good.

Thus TCP/IP spread to universities around the world.

And the scholars saw that it was good.

The techs improved a system called UUCP and created protocols for e-mail, ftp and newsgroups.

And the techs saw that UUCP was good and said GNU, thus, this protocol shall be free to all.

The campus deans said let us have more access to ARPANET, NSFNET, TCP/IP and UUCP NET via private sector telecoms who can do the wiring.

Verily the private sector telecoms wired the universities and the laboratories and created dial up for scholars in their homes.

The telecoms reaped great profits of gold and silver and precious things from those wires.

And DOD and NSF and the scholars and the techs and the telecoms saw that it was good.

NSF decreed that NSFNET and ARPANET shall be free from commerce, for it was the will of the community that the networks be for education and the spread of human knowledge.

And so NSF said we shall cast out UUCP NET because it can be used for commerce (but we will still use the free software they developed).

And thus UUCP NET was cast out.

The telecoms and the nations of the world far from North America agreed that this networked system was good and created their own networks.

And they all saw that it was good.

Thus it came to pass that the universities which had journalism schools gave their students access to what was now known as the Internet.

And lo and behold it appeared to be free (although their accounts were paid for, in part, by tuition fees). The students were taught that the Internet was educational and thus should be free for all.

At the same time their elders in journalism who loved tech were using another system called CompuServe (which the elders had to pay for with their credit cards).

The journalism students and j-professors came on to CompuServe and said, “Behold, I bring you tidings of great joy. There is this wonderful thing called the Internet and it is free.”

It came to pass that Tim Berners-Lee at CERN created the World Wide Web.

And all saw that the World Wide Web was good.

So the professors, and the students and the reporters and the editors, all of whom loved tech, all rejoiced when they saw the World Wide Web. For they thought they had found the perfect way to deliver the news.

Out of a whirlwind came Netscape.

At first only the techies loved Netscape.

Then Netscape said, we shall have an IPO.

In the year of our Lord 1995, on the ninth day of August, the IPO came to pass, and it was wonderful and the Netscape stock set a record on Wall Street.

So Netscape became front page news and was high on the evening newscasts.

The media barons and all priests and scribes of the news temples saw that much gold and silver was going to Netscape and asked “What is going on?”

So the barons and the priests and the scribes summoned those of their followers who were techies and said “Tell us, what is this Internet? What is this World Wide Web? Why is Wall Street giving gold and silver and precious things to Netscape?”

The techie reporters and editors said to the barons, the priests and the scribes, this is the Internet, this is the Web.

The techie followers showed the barons, the priests and the scribes their personal websites. The techie editors showed the barons, priests and scribes the under-the-table news sites they had created. They told the exalted ones this World Wide Web is perfect for delivering news: you can have text, you can have pictures, you can have audio and you can even have video.

The barons and the priests and scribes decreed to their techie followers and editors, “Thou shalt build websites for our news operations.”

So the techie news people and the tech techies laboured mightily and created websites. They presented the websites to the barons, priests and scribes.

The barons, priests and scribes looked at the websites and saw that they were good. So they told the news people and techies that they had done a great service and would be rewarded from the gold and silver that would flow from this new World Wide Web (although the barons, scribes and priests, like all their kind, were lying and did not intend to really reward their followers).

The techie news people and the tech techies trembled and quaked but bravely told the barons, priests and scribes, “No, oh exalted ones, that is forbidden. It has been decreed from on high that there will be no commerce on the Internet.” And they were sore afraid.

The barons, priests and scribes said to themselves, “What the fuck is going on?”

So that’s the story.


From creation to evolution

There are two key points.

First, as is well known, the Internet did evolve from military, scientific and university communications systems which were, on the surface, free, although, of course, largely paid for by the American taxpayer and university endowments.

The free exchange of information is the basis of scholarship, but it is, of course, paid for behind the scenes by government, foundation and endowment funding. Thus the culture of free information existed at the core of Internet use at the time the media first became interested in putting news on the web.

Second, in the early 1990s, before the rise of the independent Internet Service Providers and the expansion of services by the telecoms, large and small, the main communication network for the Internet in North America was the NSF Backbone, the high speed Internet communications network run by the U.S. National Science Foundation, which as part of its policy, forbade the use of the backbone for commercial purposes.

Thus, in theory and according to the conventional wisdom, no one using the Internet for commercial purposes (and that would have included charging for news) could use the main North American Internet backbone.

But in reality, the situation was a lot greyer than black and white.

I kept all my research material from 1993-1994, when I was writing Researching on the Internet (I recently donated it to the York University Computer Museum).

Here is what a couple of the leading books of the time said (books which most libraries, I suspect, discarded long ago, so they are no longer available to those who now lament “if only”):

The Internet Companion: A Beginner’s Guide to Global Networking, by Tracy LaQuey with Jeanne C. Ryer (Addison-Wesley, May 1993), put it this way:

Probably the best known and most widely applied is NSFNET’s Acceptable Use Policy, which basically states the transmission of “commercial” information or traffic is not allowed across the NSFNET backbone, whereas all information in support of academic and research activities is acceptable.

It sounds somewhat complicated, but you need to remember the original Internet began as a US government-funded experiment and no one expected it to become the widespread, heavily used production network it is today.

It’s going to take a while for commercialization and privatization of these networks to occur. The Internet as a whole continues to move to support--or at least allow access to--more and more commercial activity. We may have to deal with some conflicting policies while the process evolves, but at some point in the Internet future, free enterprise will likely prevail and commercial activity will have a defined place, making the whole issue moot. In the meantime, if you’re planning to use the Internet for commercial reasons, make sure the networks you’re using support your kind of activity.

Another book, published a little later, was Kevin M. Savetz’s Your Internet Consultant: The FAQs of Life Online (Sams, 1994):

Commercial activity isn’t allowed on the Internet? It’s purely an academic and educational network, right?

People who advertise and sell stuff on the net should be flogged, right?

Yes and no. As mentioned earlier in this book the Internet is composed of a variety of different networks. Each network has its own set of rules, called acceptable use policies.

Certain networks (particularly the National Science Foundation network, the NSFnet) have strict acceptable use policies that ban most types of commercial use.

On the other hand, another backbone network within the Internet world has been finding considerable interest among commercial internet users‑‑the Commercial Internet Exchange (CIX). The acceptable use policies of CIX are much more broad and advertising and selling are both within its purview. So although commercial activity isn’t allowed on certain parts of the Internet, it is allowed on others.

People who advertise on the Internet should only be flogged for heinous violations of Internet culture, such as sending unsolicited junk e‑mail or posting commercial messages to Usenet groups that aren’t supposed to be used for commercial messages.

In the same book, another writer, Michael Strangelove, answered a question that was, in retrospect, key for the media, and his answer was somewhat prescient as well:

Is advertising allowed on the Internet?

…many people see the Internet as a noncommercial, academic, purely technical environment. Not so: today about fifty per cent of the Internet is populated by commercial users. The commercial Internet is the fastest growing part of cyberspace.

Businesses are discovering that they can advertise to the Internet community at a fraction of the cost of traditional methods. With tens of millions of electronic mail users out there in cyberspace today, Internet advertising is an intriguing opportunity not to be overlooked. When the turn of the century rolls around and there are one hundred million consumers on the Internet, we may see many ad agencies and advertising supported magazines go under as businesses learn to communicate directly with consumers in cyberspace.

Those were print books aimed at the newbie Internet user.

But what they said also means that if the media had had the foresight to get on the Internet in the earliest years of the 1990s, they would have had to become part of the proposed Commercial Internet Exchange.

But in 1991, 1992 and 1993, online work in a newsroom was confined to what many American (and Canadian) newsrooms called the “geek in the corner.”

The situation was already changing even as those books went to press.

Here is how Wikipedia explains the changes:

The interest in commercial use of the Internet became a hotly debated topic. Although commercial use was forbidden, the exact definition of commercial use could be unclear and subjective. UUCPNet and the X.25 IPSS had no such restrictions, which would eventually see the official barring of UUCPNet use of ARPANET and NSFNet connections. Some UUCP links still remained connecting to these networks however, as administrators cast a blind eye to their operation….

In 1992, Congress allowed commercial activity on NSFNet with the Scientific and Advanced-Technology Act, 42 U.S.C. § 1862(g), permitting NSFNet to interconnect with commercial networks.[31] This caused controversy amongst university users, who were outraged at the idea of noneducational use of their network.

So the US Congress had opened the Internet to commercial activity in that country.

The geeks, bearing content

Most of the media was still clueless and didn’t jump at the opportunity, even if they ran Sunday feature stories on the geeks or closing items on the evening news about this thing called “The Internet.”

It is likely that the vast majority of executives with their eyes on Wall Street and paying consultants pushing 1970s media models had no idea that they employed a “geek in the corner,” much less what the geek was doing.

Apart from the tech companies (the growing hardware and software giants, plus the small-office startups and computer science grad students with big ideas), which made up most of Strangelove’s “commercial activity,” the private sector around the world was slow to take up the challenge.

The CBC, as Canada’s public broadcaster, had, at least in those days, a mandate to experiment and innovate. So in 1993, CBC began an experiment working toward streaming radio on the Internet, in cooperation with the Communications Research Centre. But since it was an experiment by a public broadcaster, there was no thought of charging for the service. (The history of the early days of CBC.ca shows the kinds of problems that executives faced. It was a lot harder for the private sector, which was expected to make money, and it is even harder now in the era of bean-counting consultants and their talk of profit centers.)

When business executives finally realized that the Internet was open to commerce, the news media was one of the first industries to make a major effort, investing in posting its material, most of it repurposed, on the World Wide Web. The move was most often driven by the managers and employees still around from the videotex and teletext days, who saw that web-based news might succeed where the 1980s projects had failed. Usually, these experiments were not sanctioned by head office, and the money came from a little creative budgeting.

That meant the content had to be free, right from the beginning.

There’s one factor that today’s audience-metrics-obsessed media bean counters have never considered when they say “if only”: their all-important audience. The audience for online news in the mid-1990s consisted of Internet and web early adopters, and most of them had adopted the culture of free information. In those early days, no media outlet was willing to invest in online content that was actually worth paying for. Most of the news was repurposed from existing print, radio or television, which was readily available (for a price, of course).

So when the first media pioneers ventured onto the Internet in the mid-1990s (including CNN, NBC, the CBC where I worked, the Raleigh News and Observer, The Globe and Mail and The Toronto Star and others), the media was caught in an evolutionary feedback mechanism.

To attract the early adopter audience, the news had to be free. The audience that might have paid was not yet online (although the richer business types were using proprietary electronic services, which meant they didn’t need to pay for web content either. That pre-web willingness to pay for business information is why the Wall Street Journal paywall has worked while others have failed).

Where was the money to come from? The early click through rates for the first banner ads (which many in the audience actually objected to) were dismal.

Development of good websites cost time and money, and the media was already facing the culture of free. (I predicted trouble for newspapers when Craig Saila interviewed me in fall 1996 for a Ryerson Review of Journalism article, www.clueless.@nd.hopeless.ca, published in spring 1997; registration required, but it is also available on Craig Saila’s site.)

The headline pretty much sums up the attitude students of the time had toward media management, which was failing to adapt to the fast-changing environment.

It looks like the students were right. And the Ryerson article covered only the media that had had the courage to venture onto the web by the fall of 1996.

Most of the news media were latecomers and took almost a decade to catch up in page views with the early starters. The latecomers couldn’t charge for their content because 95% of those early online services, their competitors, were free. Neither group was putting much money into real web content.

If only

There was one day when all the media could have made sure they could charge for content: September 1, 1993.

For it was in September 1993 that the Internet (not yet the web) took an evolutionary leap from a government, military and academic information network and communication system to one used by the public.

In September 1993, America Online, then the largest paid service, opened a gateway for its subscribers to Usenet, the “newsgroups” of the Internet. Those who then thought the Internet was their exclusive domain remember it with horror as the moment their private party ended. Some called it the tsunami; Wikipedia describes it as the beginning of the “Eternal September.”

Yes, there were a few news organizations with a presence on CompuServe or America Online on September 1, 1993, but far too few, and the content was far too thin.

For media that wanted to charge for content, it was already too late after September 1993, when the thousands of AOL subscribers ventured onto the adolescent Internet of the time and embraced a culture in which they expected free content.

A tectonic collision occurred that September: the leading edge of one continent collided with another.

Invasive species penetrated the long-balanced media ecosystem and disrupted it beyond imagination. Will evolutionary forces do their work? Will the news media adapt to the new environment?

Related

Thirty Years in New Media

Thirty Years in New Media Part II The Veteran Strikes Back

A combination of photo crowd sourcing and social networking.

A combination of photo crowd sourcing and social networking.

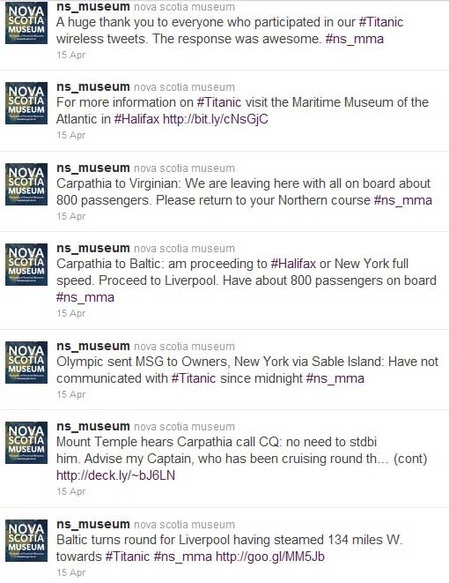

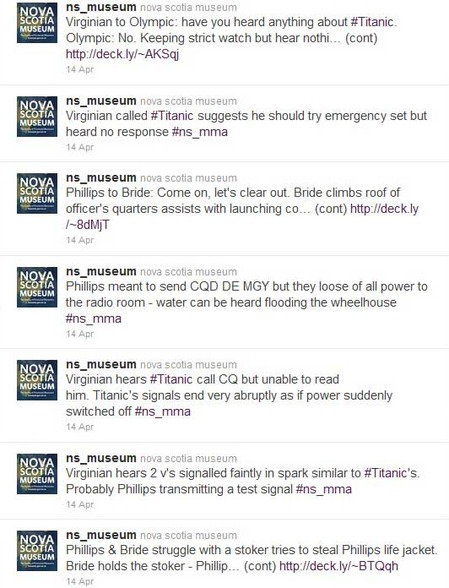

One last note, During the CBC lockout, I wrote a blog about the Titanic’s musicians and how badly they were treated by the White Star Line,. See

One last note, During the CBC lockout, I wrote a blog about the Titanic’s musicians and how badly they were treated by the White Star Line,. See

desperate media companies offers they cannot refuse, demanding that they charge for content on the Ipad so Apple can get its 30 per cent cut, content that Apple says it can censor at will. Of course, there were dozens of tablets at the 2011 Consumer Electronics Show, but the question is how many of those tablets will survive the evolutionary competition and whether or not one tablet succeeds by giving the media companies a way of saying no to the godfather from Apple.

desperate media companies offers they cannot refuse, demanding that they charge for content on the Ipad so Apple can get its 30 per cent cut, content that Apple says it can censor at will. Of course, there were dozens of tablets at the 2011 Consumer Electronics Show, but the question is how many of those tablets will survive the evolutionary competition and whether or not one tablet succeeds by giving the media companies a way of saying no to the godfather from Apple. In physics,

In physics,  system, was already working on the development of the

system, was already working on the development of the

.

.

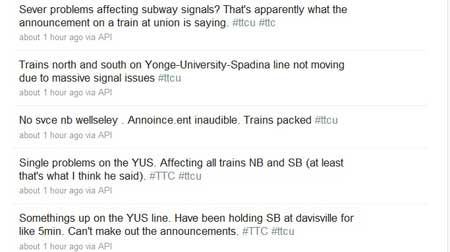

Some corporations never learn. Now we see overuse of the Twitter alert for routine news stories, even when the same news organization has Twitter accounts for the routine. That overuse only diminishes the brand and all the public has to is unfollow the overused alert.

Some corporations never learn. Now we see overuse of the Twitter alert for routine news stories, even when the same news organization has Twitter accounts for the routine. That overuse only diminishes the brand and all the public has to is unfollow the overused alert. One aggressive invasive species is Wikileaks. Wikileaks enters that investigative niche largely abandoned by the increasingly too specialized apex media species. Like other invasive species, Wikileaks, also disrupts the ecosystem. Wikileaks is not the same kind of species Again imagine a tall and solid investigative fir tree, now old and rotten. Wikileaks, perhaps, it is too early too tell, is the media ecosystem equivalent of kudzu or purple loosestrife that fills the place emptied by that fallen tree.

One aggressive invasive species is Wikileaks. Wikileaks enters that investigative niche largely abandoned by the increasingly too specialized apex media species. Like other invasive species, Wikileaks, also disrupts the ecosystem. Wikileaks is not the same kind of species Again imagine a tall and solid investigative fir tree, now old and rotten. Wikileaks, perhaps, it is too early too tell, is the media ecosystem equivalent of kudzu or purple loosestrife that fills the place emptied by that fallen tree.

time again by know nothing managers to attend a session with an expensive consultant only to find out that our staff usually knew more than the consultant. In 90 per cent of cases, consultants are a waste of time and money. In ecosystem terms, consultants are like epiphytes, air plants, that look good, often with pretty flowers, on a tree branch or trunk but are essentially parasites, living off the tree itself. If you want your staff to listen to the latest guru, pay for them to attend a conference where they can get the same canned speech at a much lower cost, and may find an even better idea in a small seminar or a corner booth.

time again by know nothing managers to attend a session with an expensive consultant only to find out that our staff usually knew more than the consultant. In 90 per cent of cases, consultants are a waste of time and money. In ecosystem terms, consultants are like epiphytes, air plants, that look good, often with pretty flowers, on a tree branch or trunk but are essentially parasites, living off the tree itself. If you want your staff to listen to the latest guru, pay for them to attend a conference where they can get the same canned speech at a much lower cost, and may find an even better idea in a small seminar or a corner booth.

.

.

TTC Communications chief Brad Ross @bradttc, also tweets, including updates on service interruptions (and gets a growing number of service complaints.). But those tweets are few and often late.

TTC Communications chief Brad Ross @bradttc, also tweets, including updates on service interruptions (and gets a growing number of service complaints.). But those tweets are few and often late.

It was clear that going home by subway wasn’t an option, so I left that station and headed a few blocks south on Yonge St. to grab a streetcar, my Treo tweeting all the way down. The customers who were able to tweet apparently getting their information from the drivers rather than the public system.

It was clear that going home by subway wasn’t an option, so I left that station and headed a few blocks south on Yonge St. to grab a streetcar, my Treo tweeting all the way down. The customers who were able to tweet apparently getting their information from the drivers rather than the public system.